Customer Support is no longer just a cost center - it's been transformed into a powerful experience center where support agents act as brand ambassadors and drivers of customer retention. But the rise of Artificial Intelligence (AI) is changing the CX landscape yet again.

In this blog, we'll dive into how machine-learning models are impacting Quality Assurance and the role of support agents. We'll also explore whether AI is empowering agents or stifling their creativity, and discuss how AI is affecting CX and the part that support agents play in the customer experience journey. Along the way, we'll share insights from a recent CEO/CX webinar featuring MaestroQA CEO Vasu Prathipati.

This will be a good one, so let’s get started.

Why the Push toward AI in a Quality Program

As Quality Assurance Managers continue to be tasked with finding ways to elevate customer service, the question often arises: How can AI improve quality assurance?

“When we ask customers why they are interested in using AI for their quality program, three responses are typically given,” shared Prathipati:

1. We're only sampling 2% of our interactions – AI can help us to do 100% sampling, providing us with more insight.

2. AI can be used to do sentiment analysis on 100% of interactions, making sentiment analysis more efficient and thorough.

3. Manual QA is expensive and filled with human errors, so AI can be the perfect solution to reduce costs and increase accuracy.

Prathipati suggests taking a more discerning approach when evaluating the potential impacts of AI on QA to help ensure better decision-making. By examining each of the initial reactions mentioned above more thoughtfully, organizations can better understand the implications of their AI-related investments and make informed decisions that align with their long-term goals.

So, let’s start with the first one.

Actionable vs. Unactionable Insights: Which Is More Important?

“We're only sampling 2% of our interactions – AI can help us to do 100% sampling, providing us with more insight.”

Of course, 100% sounds better because it means more insights.

“But let’s challenge that assumption,” said Prathipati. “Would you rather have actionable insight based on 2% of interactions or unactionable insight based on 100% of tickets?”

The answer for many at this point might be to focus on the 2%, “but what happens when the 2% and the 100% are both actionable, but in different ways?” asked Prathipati. “What if the 2% of actionable insight results in more cost-savings than the 100% of actionable insight?”

One would focus on the 2%, but is that the right choice? “All of a sudden, what matters more than 2% vs. 100% is the word actionable. We need to start spending more time thinking about what is actionable, whether it's through manual or AI-powered insight,” Prathipati pointed out.

This leads us to a discussion on:

AI and Sentiment Analysis: Hype vs. Reality

“AI can be used to do sentiment analysis on 100% of interactions, making sentiment analysis more efficient and thorough.”

In today’s fast-paced environment, expectations for what AI can do in QA are running high. “We hear promises,” said Prathipati, “that this technology can do the whole end-to-end process from detection to root cause analysis. That’s why when speaking with our customers, we ask a series of questions to get a more balanced point of view on what is a right fit and what are reasonable expectations: innovative but reasonable.”

Shifting back to our discussion on using AI to do sentiment analysis on 100% of interactions, Prathipati said, “We want actionable insights. If sentiment is low, how will you identify why it’s low to determine how to take action to improve it? The same rules in our current world still apply in this world of using AI in quality programs. What we hear from our customers is that CSAT is low, so what do we do? We build a DSAT process where we start to look at all the negative CSAT scores. With scorecards, we can do root cause analysis to determine if it’s a people issue, process issue, technical issue, or some other type of issue.”

Definitions matter too when it comes to using AI. One question Prathipati raised is “how we want to define sentiment. This is the key to actionability. For example, how do we account for customers coming in upset? How are we looking at sentiment where they're already upset? Or are we looking at customers that get upset later in the conversation?”
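
To make the distinction concrete, here is a minimal sketch of how those two cases might be separated. It assumes each customer message already carries a sentiment score between -1 and 1 from some upstream model. The function name, the threshold, and the labels are all hypothetical and do not describe any MaestroQA feature.

```python
from typing import List


def classify_customer_sentiment(scores: List[float], threshold: float = -0.3) -> str:
    """Label a conversation by when the customer's sentiment turns negative.

    scores: per-message customer sentiment in [-1, 1], in conversation order
            (assumed to come from an upstream sentiment model).
    threshold: scores below this count as "upset" (an illustrative cutoff).
    """
    if not scores:
        return "no_customer_messages"
    if scores[0] < threshold:
        # The customer was already upset in their first message.
        return "arrived_upset"
    if any(score < threshold for score in scores[1:]):
        # The customer started out fine and turned negative later.
        return "became_upset"
    return "not_upset"
```

Under this definition, a single "low sentiment" number splits into two groups that would likely call for different follow-up, which is why the definition has to be settled before the score can be acted on.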

Deciding whether to measure agent sentiment or customer sentiment leads to another question: which of these is actionable at all? If AI is used only for sentiment, will you be able to generate 4X the cost savings or revenue gain to justify that to your CFO?
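
The CFO question comes down to simple arithmetic. The sketch below assumes the 4X multiple is measured against the annual cost of the AI tool, which the webinar does not spell out. The function name and parameters are hypothetical.

```python
def clears_roi_bar(annual_cost: float, annual_benefit: float, multiple: float = 4.0) -> bool:
    """Return True if the benefit is at least `multiple` times the cost.

    annual_cost: what the AI investment costs per year (assumed basis for the multiple).
    annual_benefit: estimated cost savings plus revenue gain per year.
    """
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return annual_benefit >= multiple * annual_cost
```

The hard part is not the comparison but producing a defensible `annual_benefit` estimate, which depends on the insight being actionable in the first place.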

“We’ve heard from customers that dig into it, ones that have been burned in the past, that it’s already hard to measure a correlation between CSAT and retention or CSAT and churn or CSAT and growth.” This means that “first we might have to do the hard work to determine do we believe sentiment. Do we have data to show internally that sentiment and churn are really correlated before we can go and invest in AI?”
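
One way to do that hard work is to check the correlation on your own historical data before investing. The sketch below computes a plain Pearson correlation. It assumes you can export one sentiment (or CSAT) value and one churn indicator per account; the function name and the input layout are hypothetical.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)
```

For example, `pearson(avg_sentiment_per_account, churned_flag_per_account)` would be expected to come out clearly negative if low sentiment really goes along with churn. A value near zero would suggest the link has not been shown in your data, and even a strong correlation does not by itself prove that sentiment drives churn.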

Critical thinking is needed, recommended Prathipati, so that a company doesn’t end up with something that “sounds good on the surface, but when you get into the details, it doesn’t fulfill your goals or promises.”

Let’s move to the last reason why some push for AI in quality programs.

Manual QA vs. AI: Is AI the Silver Bullet?

“Manual QA is expensive and filled with human errors, so AI can be the perfect solution to reduce costs and increase accuracy.”

The last common reason for the shift to AI is that manual QA is expensive and, some feel, prone to human bias. Hence the perception that AI should be the perfect solution.

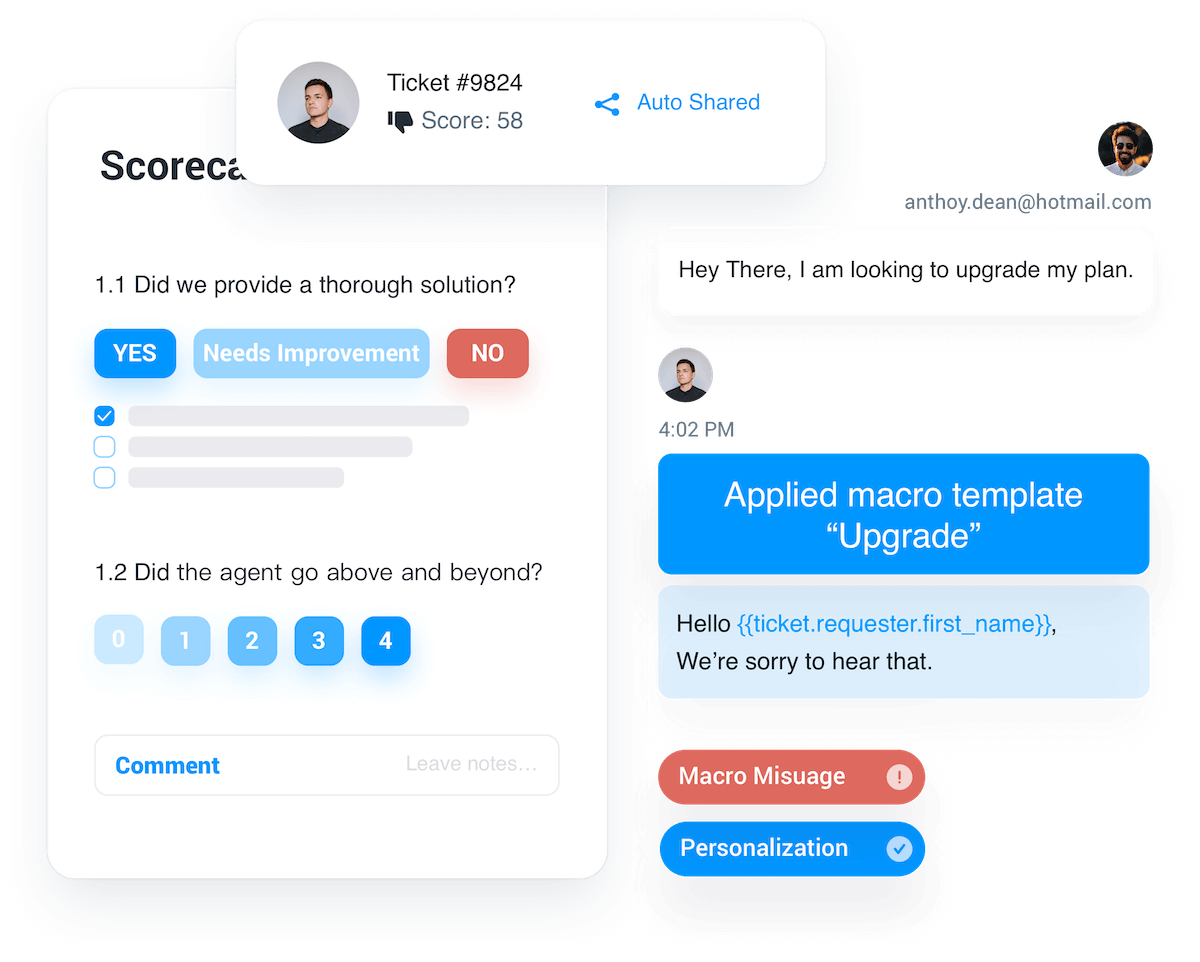

Today, “some feel that AI is the silver bullet, and they have this great vision for it, but pairing that vision with reality hasn't been fully thought through,” shared Prathipati. “Some of the questions that we ask our customers after reviewing their current scorecards revolve around the sections of those scorecards; we want to know which are the hard skills and soft skills. 99% of our customers have hard and soft skills in their scorecards.”

“Whether they are looking at other QA software vendors or us, we ask: what are vendors showing you auto-scoring around, and what will you do about the parts they aren’t covering? Most typically, we see use cases that are soft skills based.” What’s interesting in this finding, as Prathipati pointed out, is that troubleshooting and probing questions come from the hard skills section, and “it’s this section where you can save time and impact metrics like first call resolution or reduce total time to resolution.” That’s why it’s important to consider coverage when evaluating AI for auto-scoring. “There’s a role for AI, but it’s not the silver bullet for everything.” The goal, said Prathipati, is to think about how AI and manual QA “should be coupled together” so you can “really create a dynamic and exciting quality program.”

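The coverage question can be turned into a quick check against your own scorecard. The sketch below is only an illustration: the function name is hypothetical, and the scorecard criteria are made up apart from troubleshooting and probing questions, which are mentioned above.

```python
from typing import Dict, List, Set


def auto_scoring_coverage(
    scorecard: Dict[str, List[str]], auto_scored: Set[str]
) -> Dict[str, float]:
    """Return, per scorecard section, the fraction of criteria a vendor auto-scores."""
    coverage: Dict[str, float] = {}
    for section, criteria in scorecard.items():
        if not criteria:
            coverage[section] = 0.0
            continue
        covered = sum(1 for criterion in criteria if criterion in auto_scored)
        coverage[section] = covered / len(criteria)
    return coverage


# Hypothetical scorecard and vendor capability list, for illustration only.
scorecard = {
    "soft_skills": ["empathy", "tone", "greeting"],
    "hard_skills": ["troubleshooting", "probing_questions", "correct_resolution"],
}
auto_scored = {"empathy", "tone", "greeting"}
```

With these made-up inputs, `auto_scoring_coverage(scorecard, auto_scored)` reports full coverage of soft skills and none of hard skills, which is the gap the vendor question is meant to surface.
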
What about using AI for auto-grading?

“So many of our customers use quality programs where they're not only reviewing the conversation in a Zendesk or Salesforce in their case management system or listening to the call. There are a lot of back-end systems, so they must check to see if the case or issue was resolved properly. This requires going into your internal tooling. And that's a whole different beast,” said Prathipati. “How do you make sure you're accounting for that type of deep work when you're thinking about auto-grading? From our research, that's a whole different set of work. And so when we start to unpack this with customers, we can help our customers with this amazing vision that we still want to hold on to, but bringing it down so that we get the best of both worlds: the great work we're doing as people, paired with the great work of technology.”

AI In Quality Assurance: The Wrap-Up

In the end, Prathipati said, “We’ve talked about three common perceptions of AI and why people are thinking about AI in their quality program, but when you start to pull back the layers, we try to take the conversation to the next level. There’s a lot of hype for AI, but it’s really important not to look at your quality program just as a cost, but to look at the current value that it’s delivering.” The question then becomes: “When you invest in a technology such as AI, is it clearing that bar? It needs to be delivering more value than your current quality program. So it’s a one plus one equals three situation.”

If you would like to learn more about MaestroQA, request a demo today.
